Your research, entirely private.

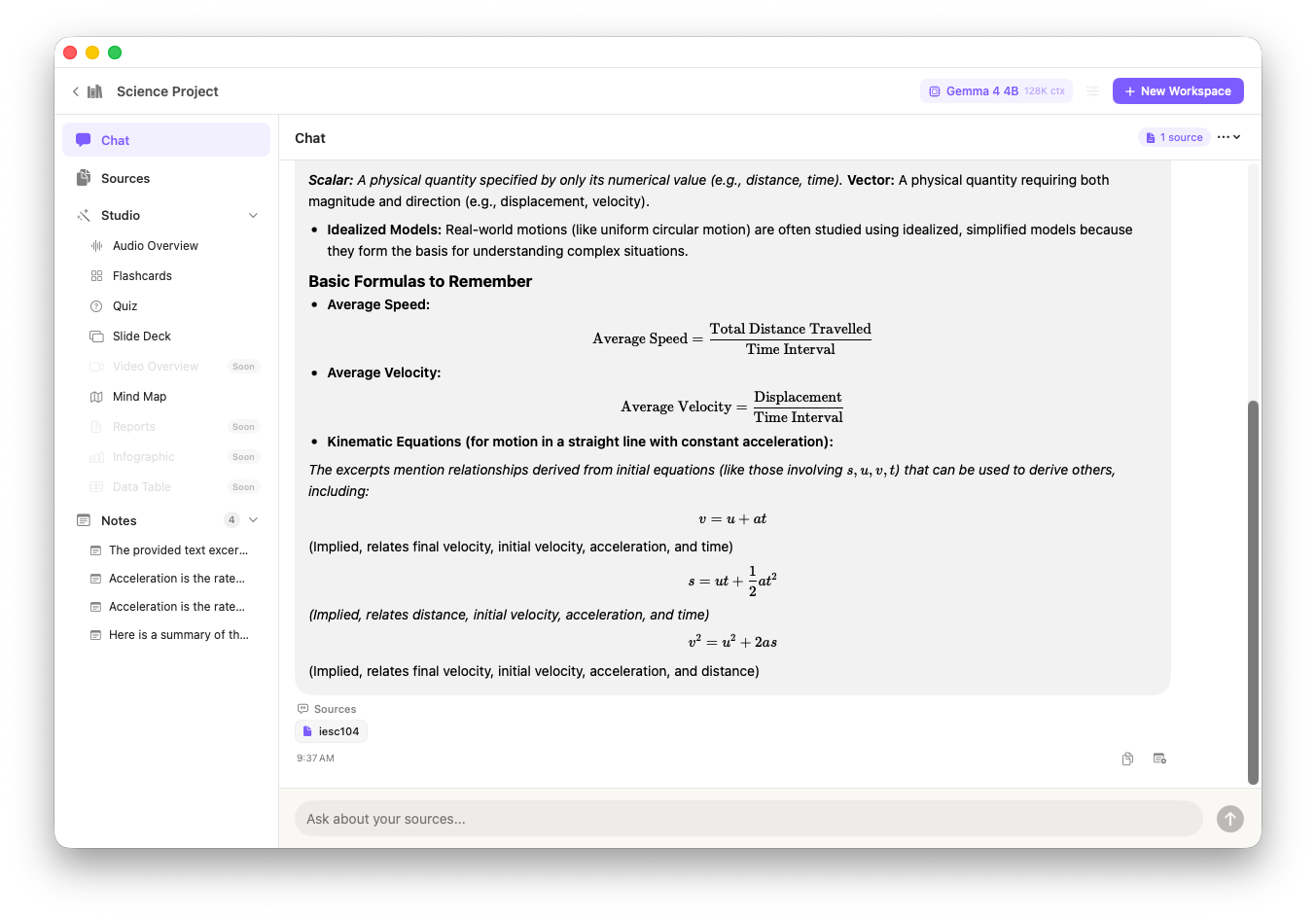

EQBook is a NotebookLM-style AI workspace that runs 100% on your device. Add PDFs, documents, audio, and video, then ask questions and get cited answers from locally run Gemma 4 models. No cloud. No accounts. Your data never leaves your device.

Free · All inference on-device · No account required